Trustworthy AI for software engineering

How can AI systems used by developers expose their assumptions, behave more predictably, and remain understandable enough to support real engineering workflows?

Research profile

My research sits at the intersection of AI-enabled developer tooling, software security, and empirical software engineering. I am especially interested in how model platforms, agents, and developer-facing AI systems fail in real settings, and how we can design better technical and process-level safeguards.

These are the same themes introduced on the homepage, expanded here as the lens through which I choose projects and papers.


I am interested in the failure modes created when agentic systems, model hubs, and tool-integrated LLM applications meet unsafe defaults or weak contracts.

Repository mining, developer discussion analysis, and deployment-aware evidence gathering are central to how I frame and validate the research questions.

This is the best current example of the kind of research problem I want to pursue in graduate school.

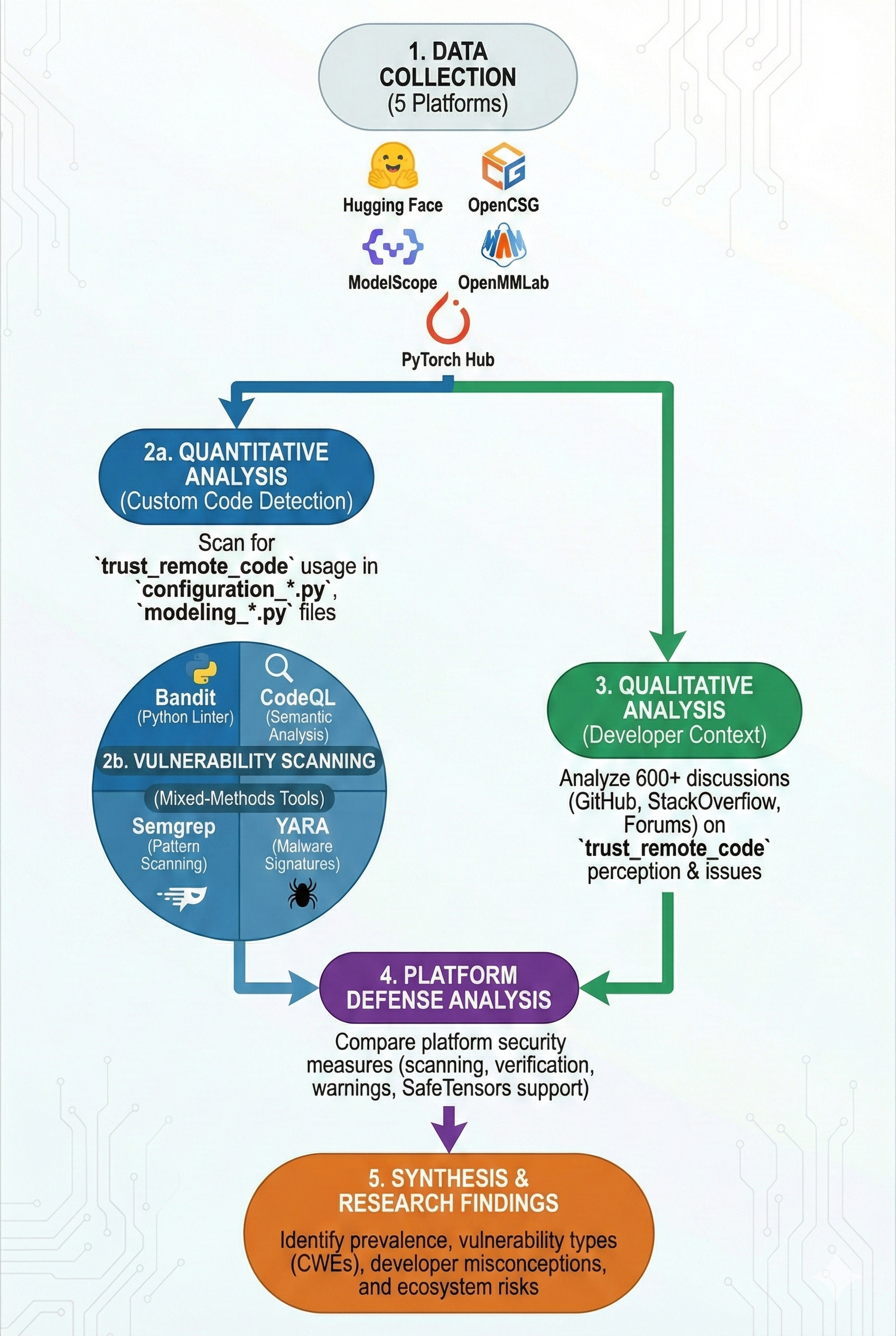

Cross-platform study of ~45,000 repositories across five ML platforms (Hugging Face, ModelScope, OpenCSG, OpenMMLab, PyTorch Hub), with co-authors Mohammad Latif Siddiq and Joanna C. Santos. Detected security issues using static analyzers (Bandit, CodeQL, Semgrep) and YARA malware signatures. Among affected repositories, CWE-502 (unsafe deserialization) appeared in 74.54% and CWE-95 (eval injection) in 15.02%; 10.41% of Hugging Face repositories contained security smells. Analyzed 600+ developer discussions to build a taxonomy of security misconceptions, and found 6.6% SafeTensors adoption and heavy trust_remote_code usage. Submitted to TOSEM 2026.
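To make the categories above concrete, here is a minimal sketch of what flagging these patterns in a single Python file could look like. It is not the study's pipeline, which relied on Bandit, CodeQL, Semgrep, and YARA; it uses only Python's standard ast module. The rule table RISKY_CALLS, the helper names, and the SAMPLE snippet are hypothetical and chosen for illustration.

```python
import ast

# Hypothetical rule table for illustration: dotted call name -> label.
RISKY_CALLS = {
    "pickle.load": "CWE-502",
    "pickle.loads": "CWE-502",
    "eval": "CWE-95",
}


def dotted_name(node):
    """Return 'a.b.c' for plain Name/Attribute chains, else None."""
    parts = []
    while isinstance(node, ast.Attribute):
        parts.append(node.attr)
        node = node.value
    if isinstance(node, ast.Name):
        parts.append(node.id)
        return ".".join(reversed(parts))
    return None


def scan_source(source):
    """Return sorted (line, label) findings for one Python source string."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call):
            continue
        name = dotted_name(node.func)
        if name in RISKY_CALLS:
            findings.append((node.lineno, RISKY_CALLS[name]))
        # Flag any call that passes the literal keyword trust_remote_code=True.
        for kw in node.keywords:
            if (kw.arg == "trust_remote_code"
                    and isinstance(kw.value, ast.Constant)
                    and kw.value.value is True):
                findings.append((node.lineno, "trust_remote_code=True"))
    return sorted(findings)


# The sample is only parsed, never executed, so no third-party package is needed.
SAMPLE = '''
import pickle
from transformers import AutoModel

weights = pickle.load(open("model.pkl", "rb"))
config = eval(open("config.txt").read())
model = AutoModel.from_pretrained("org/model", trust_remote_code=True)
'''

if __name__ == "__main__":
    for line, label in scan_source(SAMPLE):
        print(f"line {line}: {label}")
```

A syntactic check like this misses aliased imports (for example, import pickle as pk) and indirect calls, which is one reason a real analysis would lean on dedicated static analyzers rather than a hand-written rule table.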

Each project is presented with the same amount of information so the page reads as a coherent profile instead of a mixed archive.

Endpoint-black-box auditing framework for aligned backdoors in LLM agents using counterfactual environments and discrete-choice estimation.

Extended SchemaAgent with a LangGraph StateGraph architecture and a 3-tier auto-repair system, reducing redundant LLM calls by 80%.

Research track

Developed uReporter, Bangladesh's first anonymous reporting system, during the 2024 national crisis, and analyzed 124 crowd-sourced reports using transformer models.

Research track

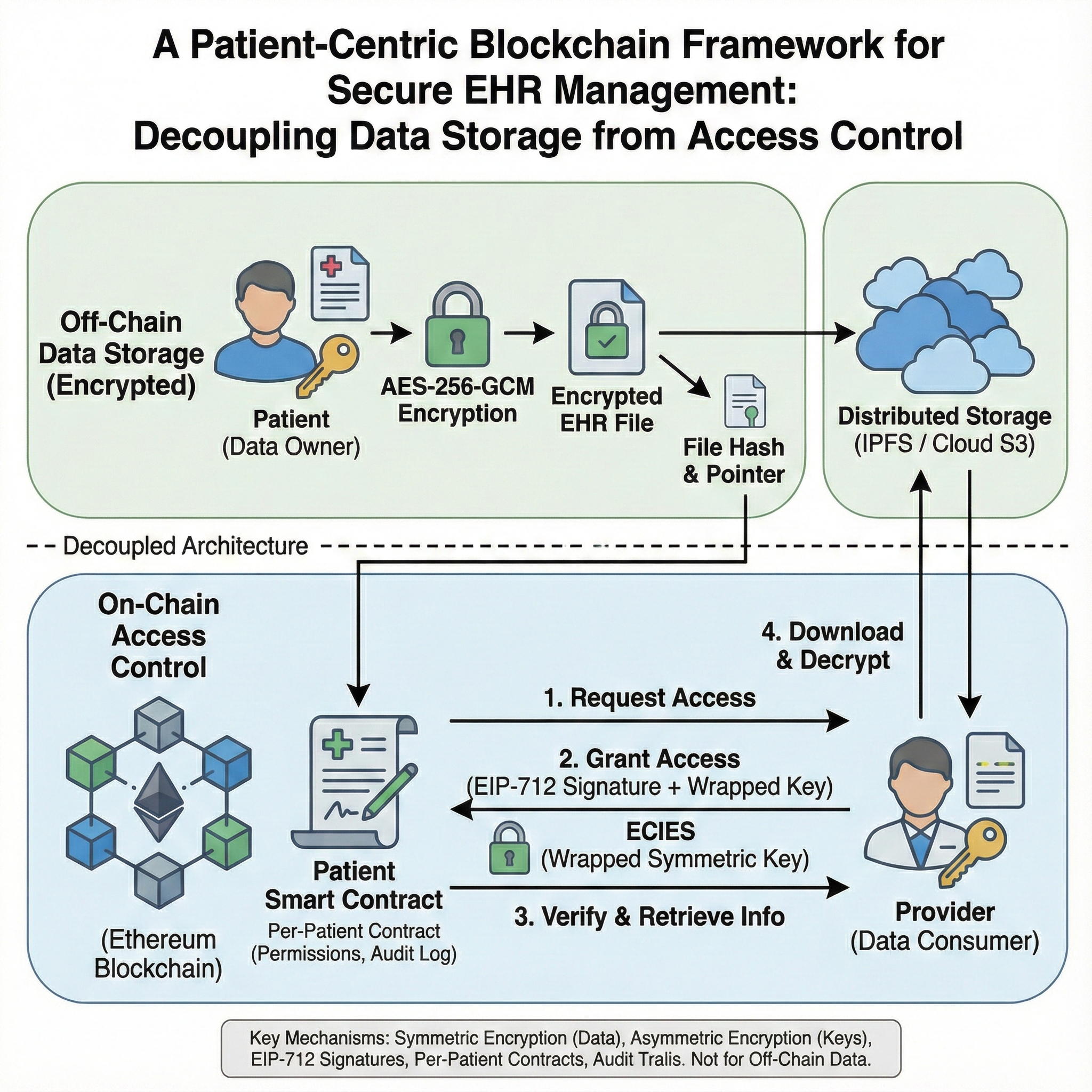

Undergraduate thesis on a blockchain framework for electronic health record (EHR) management, with encrypted off-chain IPFS storage and on-chain Ethereum access control.